About us

CausaliT was born from a vision: to bring about a new paradigm of AI that moves beyond the limits of relying on correlation rather than causation.

Current statistical and experimental tools test associations within complex systems, which makes a deep understanding of complex problems, such as those often faced in the medical domain, nearly impossible. We integrate proprietary Causal AI with Savant Language Models (sLMs) to create tools that improve outcomes and expand the availability of AI in complex domains. Our models don't just predict; they reason.

We are funded by the National Science Foundation (NSF) to help usher in the next generation of AI.

Most of today's AI systems, such as large language models, work by predicting the most likely next output based on statistical correlation. While powerful, these approaches can produce unreliable results and cannot explain their reasoning, which limits their use in high-risk settings such as healthcare. CausaliT's technology takes a fundamentally different approach by integrating causal reasoning directly into AI models.

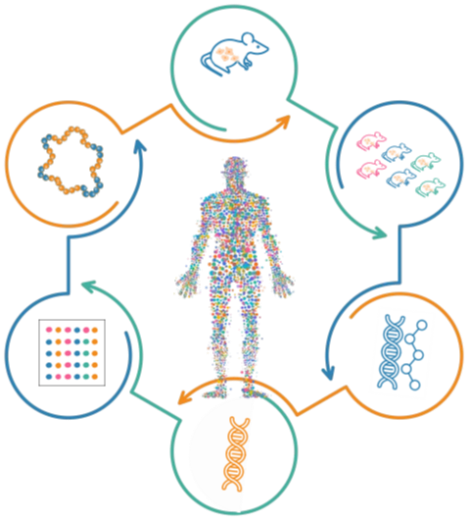

Our platform uses a two-stage process. First, it builds a structured map of cause-and-effect relationships from diverse health data sources. Then it trains a specialized, compact model, which we call a Savant Language Model (sLM), to reason over those relationships. Because these models are built around explicit causal knowledge, they can explain why they reached a given conclusion, not just what they predicted. And because sLMs are smaller and more focused than general-purpose AI, they can run on local devices, from clinical workstations to personal health hardware, without relying on cloud connectivity.

That means faster responses, lower costs, stronger data privacy, and AI that works even in resource-limited settings. CausaliT is bringing trustworthy, explainable AI to the places where it matters most.

CausaliT has been awarded a National Science Foundation SBIR Phase I grant to advance its causally aware, edge-deployable AI systems, and holds a robust patent portfolio (Docket C378-0002USP1) covering its core technology for deploying causal AI at the edge.